My Oura Ring Says Everything Will Be Fine

We are being made competent. We are being made better. We are being made.

I was sitting on BART when my phone buzzed.

What was the time? Well before 6am, and I sat bleary-eyed beneath the harsh white light of the commuter train, wondering how the hell I was going to survive ten hours of tech work.

I’d risen early for a workout—very early, in fact—before scrambling to catch the train to make an early meeting. Now, wedged into a corner seat on the Orange Line, I headed toward the Bay’s southern sprawl, where sloughs and salt flats abut business parks, the prune orchards of Santa Clara Valley cut down long ago, replaced by the monocrop of office space. Grim thoughts filled my groggy mind; already the lack of sleep was getting to me.

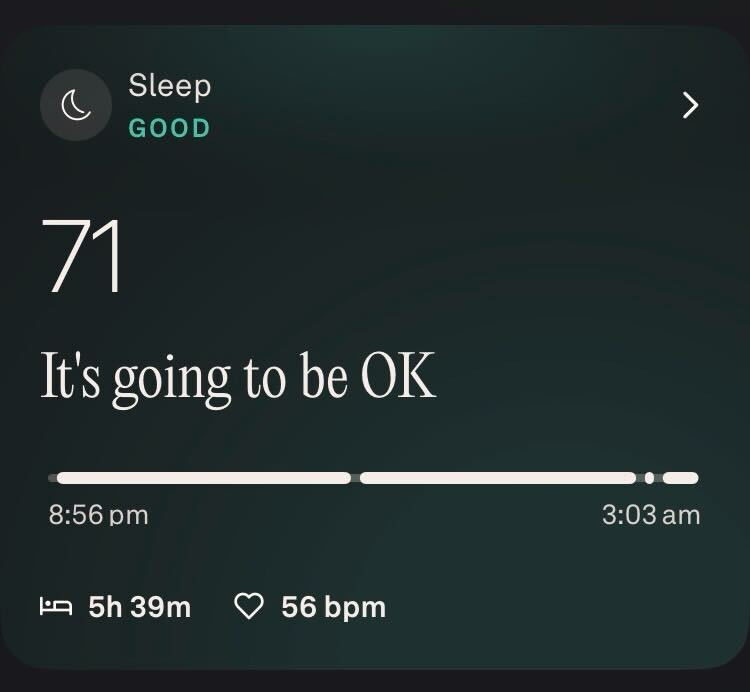

That’s when my phone buzzed and told me it was going to be okay.

It was not a person trying to reach me, but rather an app. Specifically, the app connected to the Oura ring resting upon the index finger of my left hand.

If you’re wondering why a mobile app sounds like Robin Williams in Good Will Hunting, here’s some context: the Oura ring is a sensing device placed on your finger to generate personalized health information gleaned from your heart rate, oxygen levels, and movement during sleep and activity.

Originally launched on Kickstarter in 2015, the ring has seen adoption accelerate since the pandemic. In December 2024, the company pocketed $200M in a Series D funding round. By October 2025, it had raised $900M and sold 5.5 million rings, 3 million of them in 2025 alone. Now worth $11 billion, Oura has quickly gone from niche wellness gadget to platform with millions of users.1

Oura’s growth is part of a larger trend. Other companies are angling to capture our bodies’ data. Apple Watch, Samsung’s Galaxy Ring, and the Whoop strap compete in the same space—building products to capture our biological data and push it back at us as health guidance.

Oura, however, has an advantage in both market share and vibes. It’s now a fashion accessory, especially among younger women who can use the ring to track cycle phases and fertility. A well-written Cosmopolitan review from Hannah Oh notes how, when stacked with a cheap Pavoi band, the Oura ring offers “a shockingly luxe look.”

What, exactly, is going on here? Specifically, why am I, and millions of others, allowing private companies to access our deepest, most personal information—and paying them for the privilege?

What’s made us so keen to trade away the literal pulses of our lives? How is that exchange changing our behavior? And by measuring us, what is the ring training us to become?

The Alchemist in the Machine

Understanding the invisible processes within the human body is an ancient desire. “Know thyself,” goes the maxim inscribed upon the Temple of Apollo in Delphi. Western medical practitioners have long followed this injunction.

Classical physicians like Galen theorized that hidden pathologies within the humors could be diagnosed by recognizing outward disturbances of muscle and skin. Medieval physicians like Avicenna treated the body’s surface as an instrument, developing percussive techniques to diagnose pregnancy or obstruction.

Paracelsus, a 16th-century Swiss alchemist physician, imagined that “signatures” on the surface of bodies could reveal hidden processes within, accessible through the “Light of Nature.”2 Into the 19th century, mesmerists, occultists, and cranks experimented with “animal magnetism” and the human aura to detect invisible vital forces of the body.

Oura updates the Paracelsian Light of Nature for the biometric age: an array of LEDs on the ring’s inner side flashes into your skin, much like the pulse oximeter clipped to your finger during a surgical procedure. Meanwhile, temperature and accelerometer sensors track changes in temperature and movement as you sleep or go about your day.

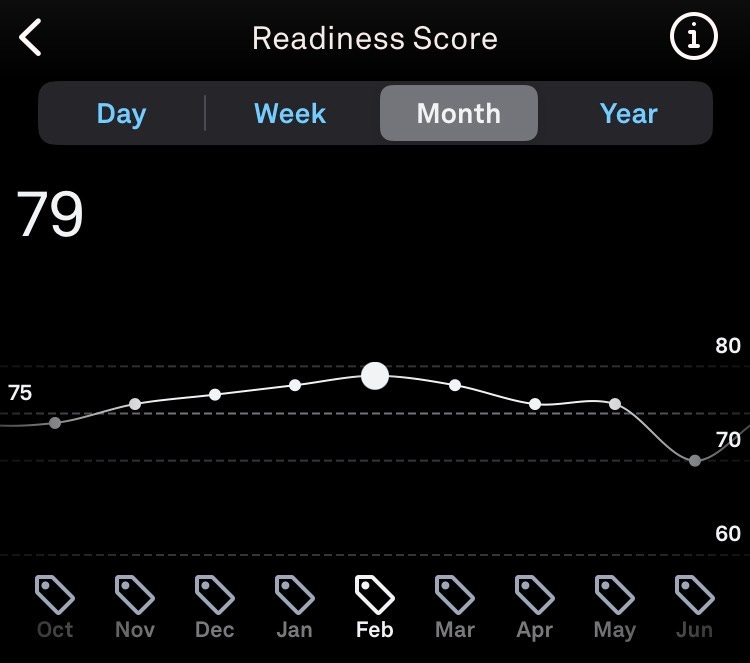

These measurements sync with an app on your phone that translates the raw sensor data into insights—scrolls of pixelated graphs, charts, and trend lines.

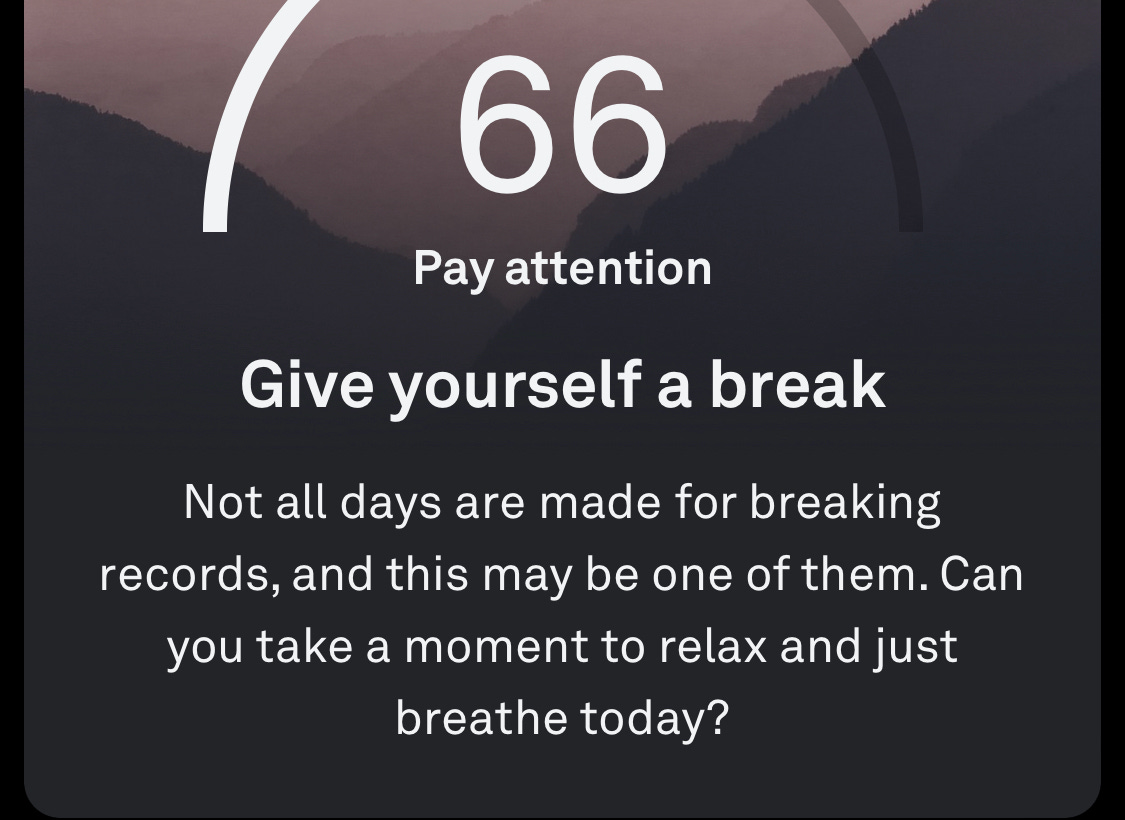

These ratings, covering a dozen-odd contributing factors like sleep efficiency, body temperature, activity levels, and resting heart rate, feed into a gestalt score called “readiness.”

Readiness for what, exactly?

It’s hard to say. Oura markets itself as “part of a health journey for millions of users.”3 Its ring is “cutting-edge sensing technology” that helps the company “pursue original discoveries that deepen the understanding of human health.” By collecting “deeply personal health metrics and insights,” the ring helps “make your health your priority.”4

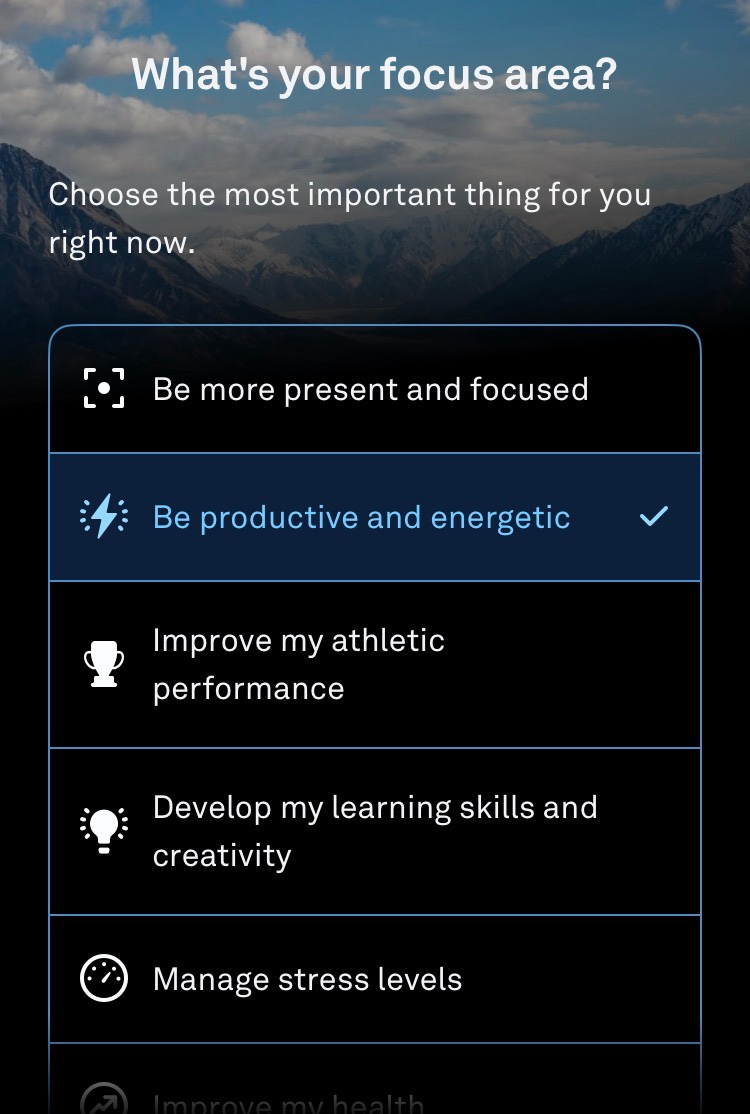

While that makes for good verbiage in a quarterly earnings report, it isn’t exactly an illuminating ethos. Of course, Oura’s executives, designers, and product teams know people have different goals. The app prompts you to manually input your priorities to tune its recommendations.

That sounds nice until you find your goals don’t fit within these pre-baked options. Or what if you have multiple conflicting goals? You might be like me, trying to cultivate a bit of creativity by waking up super early to write before going on a morning run. Like so much of human endeavor, our goals are often in tension.

Moreover, can the platform’s sensors and algorithms truly weigh the shifting, contingent, contextual, contradictory, and messy priorities that make up what it means to be human? Can it really make sense of our bodies? Can it pull signal from the beautiful, awful, unpredictable asymmetry of our physical selves?

Nevertheless, the promise of self-knowledge abounds within the app. Like the alchemists of old, Oura promises that our once-invisible interior (recovery, stress, strain, performance) can now be made legible and continuously inspected.

Solving the Human Equation

Contemplating this made me think of a recent piece from the writer Chris Arnade that highlights a hubristic ambition that still motivates many tech companies: the lingering Enlightenment-era misconception that given enough time and enough resources, eventually everything can be understood:

“Humans became imbued with an unjustified pride that can only end in defeat, while they continually kick the intellectual can down the road—one more variable! one more subatomic particle! one more curled-up spatial dimension!”

The latest version of the materialist utopia, in which we are always just one more dataset, one more model improvement away from solving the world’s problems and finally answering the unanswerable questions, is now held out by the fiercest advocates of artificial intelligence.

To be fair, Oura is not promising to solve the Big Questions about the meaning of life. But it is promising you can “Get the best sleep of your life,” take the guesswork out of trying to conceive, and “paint a truly holistic picture of your health.” Such claims run along the same axis of ambition currently funding the AI bubble: that superintelligence, among other things, can finally realize the Paracelsian promise of rendering the invisible visible.

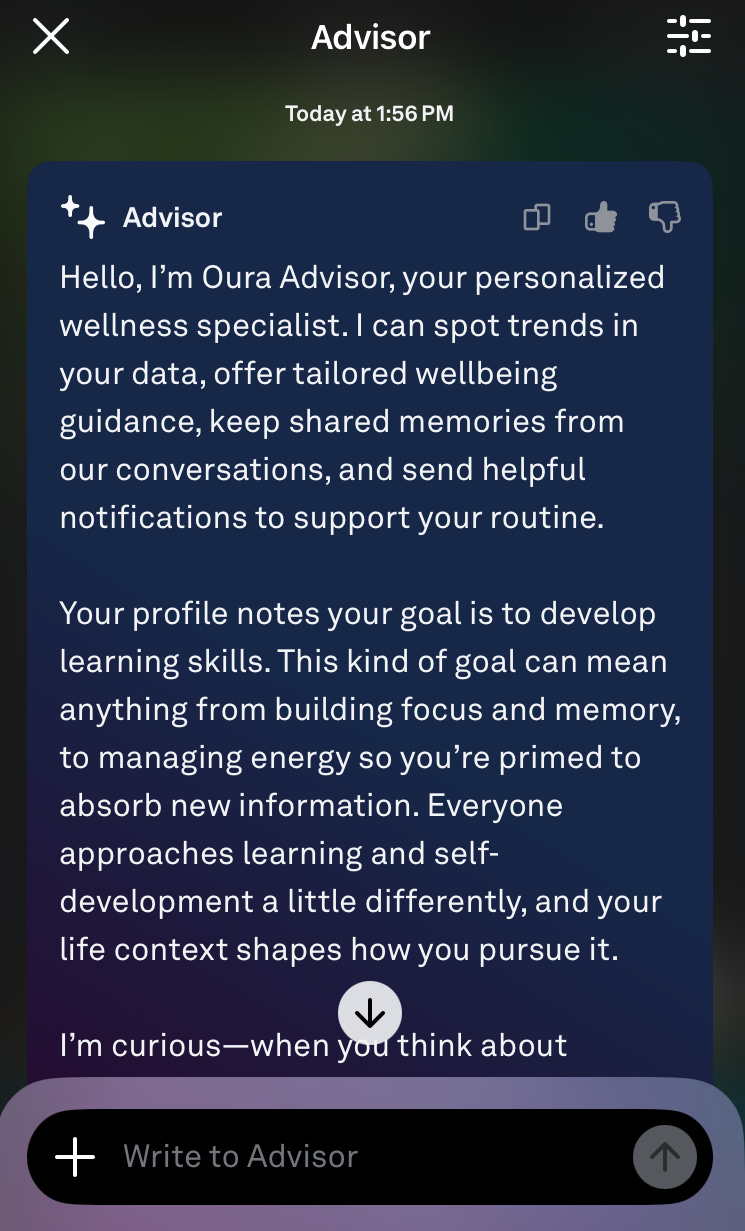

Indeed, Oura now leverages generative AI models to translate biometric data into personalized insights. A recent update to the app introduced a headline AI feature, Advisor, a conversational interface for tailored guidance. Other parts of the app also became wordier, likely with model-generated text. Content proliferates: every insight can be tapped open into longer read-outs; menus of trends and reports come laden with chunks of upbeat copy, personalized to your data.

Candidly, I’ve found this AI-powered redesign effective.

I check the app more often, partly because it has more to tell, and also because the insights feel more focused, reinforcing when I’m feeling off, nudging me to rest when I might otherwise power through burgeoning illness or injury.

But what I find interesting is how the improved content supports the platform’s unique position at the intersection of both physical and psychological attention. Oura helps us discipline our physiology by guiding movement, rest, and exercise, while also influencing our focus and agency.

Many apps try to capture your attention. Fewer try to capture the very movement of your body.

Feedback Loops of the Self

While writing this essay, I remembered the work of the philosopher Louis Althusser, whom I read in grad school for a fabulous seminar led by Victoria Kahn. Althusser argued that modern subjects are formed through ideologically driven institutions like the family, school, media, or church.

These institutions instill in us the sense that certain norms (working obediently at a desk or on a factory floor, obeying traffic signs, saving to buy a house) are not only normal but the natural course of things. It’s a process he calls “interpellation,” making you feel like you are acting out of free will, even though you’re really following a social script written to sustain existing relationships of power.5

I think the concept of interpellation helps clarify that platforms like Oura don’t just inform us; they actively train us to view ourselves as data streams to be optimized, rather than just persons living our lives. When the phone buzzes in my pocket advising recovery, or when it tells me to stretch my legs, or when it tells me bedtime is approaching, I hold myself accountable to its judgments. In so doing, I fold my body and consciousness into its continuous circuit of measurement, evaluation, and self-correction.

OK, so what? I suppose the larger question is this: as we let data-derived optimization drive more of our behavior, to what end shall we deploy our optimized minds and bodies? In whose vision of the good life will we be rendered?

My hunch is that apps like Oura make us better able to participate in an economy that creates more apps. Our current biometric tracking craze, made increasingly efficacious through generative AI, is the knowledge economy conditioning us as both producers and consumers to keep building the knowledge economy.

Health tracking apps make us more fit to write copy. To design the pixels that define the UI. To lead scrum teams. To write code (or train the agents that write code). To post on Substack. To post on TikTok. To fine-tune the LLMs that hydrate our phones with rich, personalized content.

Today, my readiness score was 85—“optimal” according to my Oura ring. Again, I ask: readiness for what? Perhaps best not to think about it too much. We are being made competent. We are being made better. We are being made.

It’s going to be OK.

2. Paracelsus, “Concerning the Signature of Natural Things,” in The Hermetic and Alchemical Writings of Paracelsus, trans. and ed. Arthur Edward Waite, vol. 1 (London: James Elliott, 1894), in the treatise Concerning the Nature of Things, Book IX, 188–190.

3. https://ouraring.com/about-us

4. https://ouraring.com/science-and-research

5. Louis Althusser, “Ideology and Ideological State Apparatuses (Notes towards an Investigation),” in Lenin and Philosophy and Other Essays, trans. Ben Brewster (New York: Monthly Review Press), 121–176.
